Have you ever created an audio dissolve and heard an audible volume dip in the middle of the effect? Perhaps when you’re trying to join two similar pieces of fill? If it’s happened to you, you know how maddening it can be to eliminate. Final Cut offers a neat solution: two kinds of audio dissolves, one of which raises the level in the middle of the effect by 3 dB. Audio editing applications typically permit even more choices.

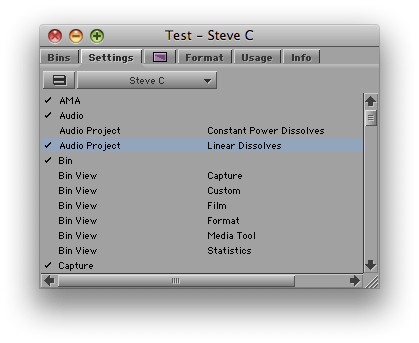

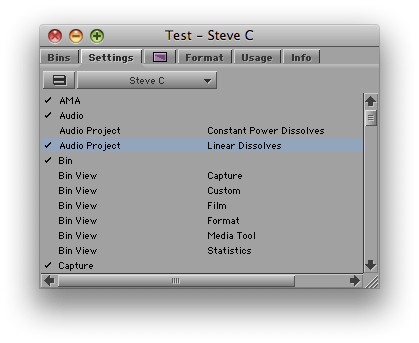

It turns out that the Media Composer offers a choice of dissolve types, too. But the feature is hidden in a setting and barely mentioned in the docs. The setting is labeled “Dissolve Midpoint Attenuation.” You’ll find it in the Effects tab of the Audio Project settings panel. Similar to Final Cut, your choices are Constant Power, which adds a 3 dB boost in the center of the dissolve compared with Linear, and Linear, which is the default. I had thought the setting altered all dissolves, including the ones you’ve already made. But in fact, it affects new dissolves only; old ones are left alone.
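To see where that 3 dB comes from, here is a minimal sketch of the two curve shapes in Python. It assumes the textbook definitions: a straight-line ramp for Linear and a sine/cosine pair for Constant Power. I don't know the exact curves either application uses internally, so the function names and shapes here are illustrative only.

```python
import math

def linear_gains(t):
    """(outgoing, incoming) gains for a straight-line crossfade, t in [0, 1]."""
    return 1.0 - t, t

def constant_power_gains(t):
    """(outgoing, incoming) gains for a sine/cosine crossfade (assumed shape)."""
    angle = t * math.pi / 2
    return math.cos(angle), math.sin(angle)

def to_db(gain):
    """Convert a linear amplitude gain to decibels."""
    return 20 * math.log10(gain)

# Compare how far each side is pulled down at the center of the dissolve.
for name, curve in (("Linear", linear_gains), ("Constant Power", constant_power_gains)):
    out_gain, in_gain = curve(0.5)
    print(f"{name}: each side at {to_db(out_gain):.2f} dB at the midpoint")
```

Under these assumptions each side sits at roughly -6 dB at the midpoint of a linear dissolve and roughly -3 dB at the midpoint of a constant-power one, which is the 3 dB difference described above.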

The trouble with this implementation is that it’s hard to quickly alter an existing dissolve and compare options. And you have no indication in the timeline of the type of dissolve you’ve created. FCP allows you to change a dissolve type with a contextual menu pick, and it labels each effect in the timeline.

But while not ideal, in practice you can make the MC method work. Simply duplicate your Audio Project setting (select it and hit Command-D). Then open each setting by double-clicking, adjust one to be Constant Power and the other Linear, and name them appropriately. Once you’ve created these settings, you can quickly switch between them by clicking in the area to the left of the setting name (putting a check mark there).

You probably want to let Constant Power be your default. For most dissolves, it’s more likely to produce a smooth transition. For fades, you may prefer the Linear setting.
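A rough way to see why one curve suits some joins better than the other is to add up the two sides at the midpoint. The sketch below is an idealized model, not a measurement of any application: it assumes unrelated material (two different pieces of fill, say) adds in power, while identical waveforms add in amplitude. The names `MIDPOINT_GAIN` and `summed_level_db` are made up for the example.

```python
import math

# Per-side gain at the midpoint of the dissolve (assumed curve shapes).
MIDPOINT_GAIN = {
    "Linear": 0.5,                     # each side at about -6 dB
    "Constant Power": math.sqrt(0.5),  # each side at about -3 dB
}

def summed_level_db(gain, correlated):
    """Midpoint level of outgoing + incoming, relative to one clip at full level."""
    if correlated:
        # Identical waveforms add in amplitude.
        return 20 * math.log10(gain + gain)
    # Unrelated material adds in power.
    return 10 * math.log10(gain ** 2 + gain ** 2)

for name, gain in MIDPOINT_GAIN.items():
    unrelated = summed_level_db(gain, correlated=False)
    identical = summed_level_db(gain, correlated=True)
    print(f"{name}: unrelated clips {unrelated:.1f} dB, identical clips {identical:.1f} dB")
```

In this model a linear dissolve between unrelated clips dips about 3 dB in the middle, while constant power stays flat. With identical material the situation reverses: linear stays flat and constant power bumps up about 3 dB. Real clips fall somewhere in between, so it's worth trying both.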

I’m wondering whether readers here have used this feature. It was new to me, and I’m curious whether you’ve tried it and how it’s worked in practice. Please share your impressions in the comments.
